CIOs based in Australia and New Zealand are caught between a rock and a hard place. The board expects us to accelerate digital transformation and slash technical debt, but we’re doing it amid a severe local IT skills shortage and under some of the strictest data privacy and cybersecurity regulations in the world.

For most of us, the legacy codebase and its associated technical debt are an anchor dragging down our ability to transform. However, the rapid maturation of generative AI gives us a serious lever to pull. Aviato have seen firsthand how AI-assisted legacy code refactoring can drastically cut delivery times.

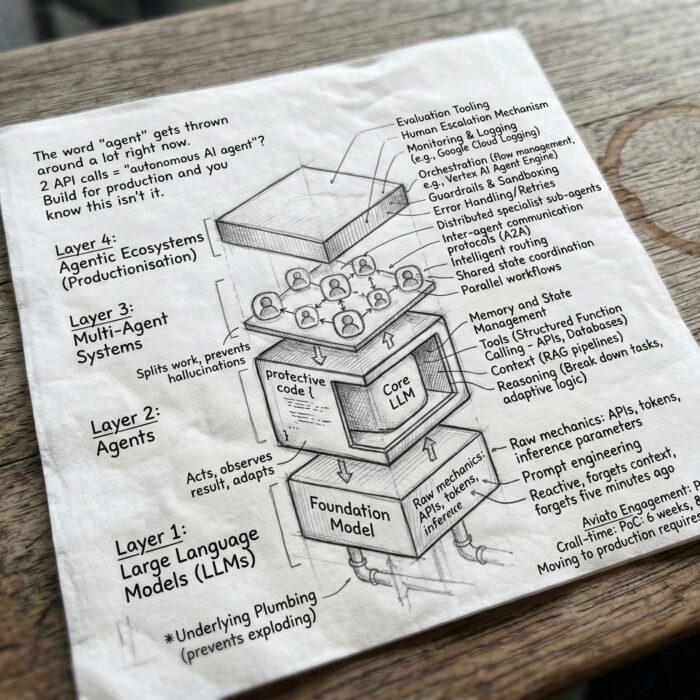

Without the right guardrails in place, though, we’re just speeding up the rate at which we introduce novel security vulnerabilities, create compliance risks, and add to our technical debt. Here is how we map governance, security controls, and compliance steps so we can leverage AI for secure code modernization while keeping security and the risk committee happy.

1. Facing Our Unique Australian Local Challenges

Before we let our engineering teams loose with AI tools, we have to look closely at the Australian CIO challenges we deal with daily. We can’t adopt AI with a Silicon Valley “move fast and break things” mindset. We have to balance speed against:

- Ruthless Regulatory Scrutiny: We have the Privacy Act, the SOCI Act, and if you’re in finance, APRA’s CPS 234 breathing down your neck.

- IP and Data Leakage: The absolute nightmare scenario of a developer pasting proprietary code or unmasked customer data into a public, consumer-grade AI model to get a quick fix.

- The “Confident Junior” Problem: AI models will sometimes hallucinate or write code that looks beautiful but hides deep architectural flaws.

- Residency: Most AI models run offshore. Do your policies allow code to be transmitted outside Australia or New Zealand?

- For or With? Consultants (including Aviato) can refactor for you, but you might need the internal capability to comply with regulations. Aviato can also do it with your team to build that capability.

To survive this, our adoption of AI can’t be a grassroots, developer-led free-for-all. It demands top-down governance and control.

2. Laying Down the Law: Governance for AI in Software Development

We’ve learned that effective governance and security controls for AI in software development are about giving teams a safe paved road to drive on.

- Draw a Line with an Acceptable Use Policy (AUP): I explicitly ban the use of unauthorized, public AI assistants for company code. We only use enterprise-grade AI tools (like Gemini) where we have a watertight contract stating our codebase won’t be used to train external models.

- Enforce the Human in the Loop (HITL): I tell my teams to treat AI like a highly capable, extremely fast, but ultimately unproven junior developer. The AI can suggest the refactored code, but a senior engineer must review, test, and own that commit.

- Lock Down the Tooling: We restrict AI refactoring to specific, approved languages and frameworks. The AI needs to be operating within our specific architectural guidelines, not inventing new ones on the fly.
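
The human-in-the-loop and tooling rules above can be expressed as an automated merge gate. The following is a minimal sketch only: the `PullRequest` shape, the approved file extensions, and the reviewer names are hypothetical placeholders, not a description of any particular Git platform's API or of our actual policy values.

```python
from dataclasses import dataclass, field
from pathlib import PurePosixPath

# Hypothetical policy values, for illustration only.
APPROVED_EXTENSIONS = {".java", ".py", ".ts"}
SENIOR_REVIEWERS = {"alice", "bob"}


@dataclass
class PullRequest:
    author: str
    ai_assisted: bool
    changed_files: list[str]
    approvals: set[str] = field(default_factory=set)


def policy_violations(pr: PullRequest) -> list[str]:
    """Return the reasons an AI-assisted pull request must not merge yet."""
    violations: list[str] = []
    if not pr.ai_assisted:
        # This sketch only covers AI-assisted changes.
        return violations

    # Human in the loop: a senior engineer other than the author must approve.
    senior_approvals = (pr.approvals & SENIOR_REVIEWERS) - {pr.author}
    if not senior_approvals:
        violations.append(
            "AI-assisted change needs approval from a senior engineer "
            "other than the author"
        )

    # Lock down the tooling: only approved languages may be AI-refactored.
    for path in pr.changed_files:
        if PurePosixPath(path).suffix not in APPROVED_EXTENSIONS:
            violations.append(
                f"{path}: AI refactoring is not approved for this file type"
            )
    return violations
```

A CI job could call `policy_violations` on each pull request and fail the build when the list is non-empty, so the policy is enforced by the pipeline and not by memory.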

3. Shifting Left: Security Controls for Modernization

AI-generated code isn’t inherently secure; in fact, it often regurgitates the insecure patterns it was trained on. To achieve true secure code modernization, our security controls have to shift even further left.

- Zero Trust Data Masking: Before we let an AI tool analyze a monolithic legacy system, we run automated scripts to strip out the hardcoded credentials, API keys, and PII that we all know are buried in there from ten years ago.

- Pipeline Scanning: Static and Dynamic Application Security Testing (SAST/DAST) in the CI/CD pipeline is mandatory. Every line of refactored code gets scanned for OWASP Top 10 vulnerabilities before it ever gets near a merge.

- Strict Dependency Tracking (SBOM): AI loves to suggest importing open source libraries to replace our clunky legacy logic. That’s fine, but we maintain a strict Software Bill of Materials (SBOM) to track these new dependencies and monitor them for supply chain vulnerabilities.
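
As an illustration of the data masking step, here is a minimal sketch of a pre-processing pass that redacts likely secrets and email addresses before source code is sent to an AI tool. The regular expressions and the `mask_source` function are assumptions made for this example. They catch only a few obvious patterns, and a real rollout would more likely rely on a dedicated secret-scanning and PII-detection tool.

```python
import re

# Illustrative patterns only; they will miss many real-world secrets.
SECRET_ASSIGNMENT = re.compile(
    r"""(password|passwd|secret|api_?key|token)(\s*[:=]\s*)(["'])(.*?)\3""",
    re.IGNORECASE,
)
AWS_ACCESS_KEY = re.compile(r"\bAKIA[0-9A-Z]{16}\b")
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def mask_source(text: str) -> tuple[str, int]:
    """Redact likely secrets and emails; return (masked_text, redaction_count)."""

    def redact_secret(match: re.Match) -> str:
        # Keep the variable name and quotes so the code still parses.
        name, sep, quote = match.group(1), match.group(2), match.group(3)
        return f"{name}{sep}{quote}<REDACTED_SECRET>{quote}"

    total = 0
    text, n = SECRET_ASSIGNMENT.subn(redact_secret, text)
    total += n
    text, n = AWS_ACCESS_KEY.subn("<REDACTED_AWS_KEY>", text)
    total += n
    text, n = EMAIL.subn("<REDACTED_EMAIL>", text)
    total += n
    return text, total


if __name__ == "__main__":
    legacy = (
        'String dbPassword = "hunter2";\n'
        'String awsKey = "AKIAABCDEFGHIJKLMNOP";\n'
        "// owner: jane.doe@example.com\n"
    )
    masked, count = mask_source(legacy)
    print(masked)
    print(f"{count} redactions")
```

Keeping the variable names and quotes intact means the AI tool still sees structurally valid code, while the redaction count gives the pipeline something to log and audit.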

4. Translating AI to the Risk Committee: Compliance Management

“The AI wrote it” isn’t an acceptable excuse for a breach. Effective compliance risk management means mapping our technical controls directly to our local obligations. Here’s the cheat sheet I use:

| The Regulator / Standard | The AI Refactoring Risk | How We Control It |
|---|---|---|
| Privacy Act (OAIC) | Accidental exposure of PII embedded in legacy databases to external AI vendors. | Mandatory local data masking; strict use of enterprise AI tiers with zero retention clauses. |
| APRA CPS 234 | Introduction of unauthorized or poorly tested code changes that threaten infosec. | Enforced HITL peer reviews; strict role-based access control (RBAC) in our CI/CD pipelines. |
| SOCI Act | Supply chain attacks introduced via AI hallucinated or vulnerable third-party libraries. | Automated SBOM generation; continuous vulnerability scanning of all dependencies. |
| ASD Essential Eight | Circumventing application control or introducing unpatched vulnerabilities. | Rigorous penetration testing on refactored apps; restricting AI tool access to authorized personnel only. |
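
One way to keep a mapping like this honest is to hold it as data and check it against the controls that are actually switched on. The sketch below assumes hypothetical control identifiers (such as `local_data_masking`) derived from the table. It is not an official interpretation of any regulation, and the names are not taken from any real tool.

```python
# Obligation -> controls we rely on to address it.
# Control identifiers are hypothetical labels for the table entries.
CONTROL_MAP: dict[str, set[str]] = {
    "Privacy Act (OAIC)": {"local_data_masking", "zero_retention_ai_tier"},
    "APRA CPS 234": {"hitl_peer_review", "cicd_rbac"},
    "SOCI Act": {"sbom_generation", "dependency_vuln_scanning"},
    "ASD Essential Eight": {"penetration_testing", "restricted_ai_tool_access"},
}


def compliance_gaps(enabled_controls: set[str]) -> dict[str, list[str]]:
    """Return, per obligation, the mapped controls that are not enabled."""
    gaps: dict[str, list[str]] = {}
    for obligation, required in CONTROL_MAP.items():
        missing = required - enabled_controls
        if missing:
            gaps[obligation] = sorted(missing)
    return gaps


if __name__ == "__main__":
    # Example: everything is enabled except SBOM generation.
    enabled = set().union(*CONTROL_MAP.values()) - {"sbom_generation"}
    print(compliance_gaps(enabled))
```

An empty result means every mapped control is enabled. Anything else is a concrete list of gaps to take to the risk committee.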

5. My Advice: Don’t Boil the Ocean

If you want to reduce delivery time without spiking your risk profile, do not go for a “big bang” rollout. Here is the phased approach that works for us:

- Run a Quiet Pilot: We start with a low-risk application. We build an AI pipeline to do the refactoring and assemble a small, trusted squad of senior developers to review each pull request.

- Baseline the Metrics: We track deployment frequency, lead time, and security defect rates, and compare them against our manual refactoring baselines.

- Fix the Frictions: The pilot will expose the gaps in your CI/CD pipeline and your AUP. Fix them before you scale.

- Train Before You Scale: When we finally roll the tools out broadly, the rollout is accompanied by mandatory training on recognizing AI hallucinations and “prompt engineering for security.”
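
The baseline comparison in the pilot can be a very small calculation. The sketch below is illustrative only: the `DeliveryMetrics` fields and units are assumptions about how the three metrics might be recorded, and the figures in the example are invented placeholders, not results from any real pilot.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class DeliveryMetrics:
    deployments_per_week: float
    lead_time_days: float
    security_defects_per_kloc: float


def percent_change(baseline: float, pilot: float) -> float:
    """Percentage change from the baseline value to the pilot value."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero to compute a percentage")
    return (pilot - baseline) / baseline * 100.0


def compare(baseline: DeliveryMetrics, pilot: DeliveryMetrics) -> dict[str, float]:
    """Percentage change for each metric, rounded to one decimal place."""
    return {
        f.name: round(
            percent_change(getattr(baseline, f.name), getattr(pilot, f.name)), 1
        )
        for f in fields(DeliveryMetrics)
    }


if __name__ == "__main__":
    # Placeholder numbers purely to show the calculation.
    manual = DeliveryMetrics(2.0, 10.0, 0.5)
    ai_pilot = DeliveryMetrics(3.0, 6.0, 0.5)
    print(compare(manual, ai_pilot))
```

Reading the three numbers together matters: a shorter lead time only counts as a win if the security defect rate has not risen alongside it.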

Transforming a legacy codebase doesn’t have to be a multi-year, high-risk nightmare anymore. Aviato Consulting can do the whole thing for you, or work with you to build internal capability.

By wrapping our AI tools in robust governance, localized compliance mapping, and strict security controls, we can safely clear our technical debt and finally give our engineering teams the velocity they and the business are asking for.

See Also: Aviato App Development or AI & ML