The hype around Artificial Intelligence has moved from the theoretical to the tangible, with businesses moving more of their experiments towards production use cases. While media reports of 90% AI project failure may be exaggerated, a significant number of promising initiatives still falter before reaching production. In my experience helping large enterprises, far fewer than 90% of these projects fail, but we are still seeing a few too many. Some projects finish on time and on budget, deliver what was asked, and still do not move into the production phase. The cause is not a lack of enthusiasm, funding, ambition or technology; it is a failure to build the strategic and technical scaffolding required to productionise AI. Moving from isolated pilot projects to scaled AI is a journey, and the people and change aspects need to be well planned out.

Scaling AI successfully has a number of prerequisites:

Programme & Change Management

When Cloud became a hot topic, large programmes of work were spun up with governance teams and PMOs, and migrations were segmented into the 7 R’s and then split further into waves. AI is going to be substantially more transformative than moving workloads to the cloud, and its impact on people is going to be far greater, yet little thought has been given to the programme and change management aspects. This requires a fundamental shift in thinking: from treating AI as a series of disjointed tech experiments to embedding it as a core, strategic capability. That means bringing together the tech teams and change teams, spinning up the required PMO, and developing a change strategy that incorporates your people.
Cloud Foundations

For our clients, this often means building out a proper resource hierarchy in the cloud, defining identity and access management protocols, implementing robust security controls, and establishing clear cost governance mechanisms. This gives everybody assurance that proprietary data will not be leaked or used to train models, and it is the foundation for giving the right people the right access. One thing that is still unclear to execs is what the infrastructure cost of AI is going to be. In our experience it is often orders of magnitude cheaper than execs are estimating, but those savings are quickly swallowed up by the required change management.

Use Case Identification

A focus on solving critical business problems is something that technologists often forget about. Aviato are sure that a disciplined framework for identifying and prioritizing use cases is what separates the projects that realise enterprise value from those that end up being scrapped. This does not need to be complex, and a bottom-up approach seems to get the most traction: the employees at the coalface are acutely aware of which parts of their jobs they want to automate away. What we have seen work:

Broad or Deep

One area where expectations are often misaligned is what the AI implementation is supposed to do. Google Agentspace and Glean are familiar examples of a broad, enterprise-wide AI implementation: they summarise all of your company’s knowledge and provide a chat interface similar to ChatGPT or Gemini, but drawing on your company data. These agents are great at saving time across a broad range of use cases but are very unlikely to take autonomous actions. On the other hand we have the deep agents. These are very specific: they will help Peter from the legal team review contracts faster, or Mary from security find security details in logs. These agents are more likely to take autonomous actions, and each is built for a particular role.
When implementing a broad AI platform, a lot of people expect something that will take autonomous actions, and while Google’s Agentspace has a very exciting roadmap, it is just not at the level of doing this deep work yet.

Centralised Framework

Once you have a production AI agent the job is not over. You now need a way to manage it and a structured approach to optimising it. What happens when OpenAI or Google release a new model: do you switch immediately? Do the benchmarks they provide match your use case? In the same way as we run software updates for the newest version of Java, a structured approach to life cycling system prompts and agents is required, validating each change against the metrics that matter to your use case. Latency is key for a chatbot, accuracy is key for a software engineering agent, and cost is a consideration for all use cases. Google is leading the way with its Vertex AI Evaluation tooling, but skipping this step and “YOLOing” changes to production agents is a problem waiting to happen.

Conclusion

Aviato are sure the future of the professional workforce is a partnership between humans and AI agents. However, this future won’t arrive by accident. It must be built with a disciplined, structured approach that treats AI not as a series of tech experiments, but as part of the core business strategy, and run as a transformation project.
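As a rough illustration of the structured lifecycle described under Centralised Framework, the sketch below gates a model or prompt change on the metrics that matter to a use case. Everything in it is an assumption made for illustration: the `EvalResult` fields, the `should_promote` helper and the tolerance values are hypothetical and are not part of Vertex AI Evaluation or any other tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvalResult:
    """Aggregate metrics from running one model/prompt version over a fixed test set."""
    accuracy: float               # fraction of test cases judged correct (0.0 to 1.0)
    p95_latency_ms: float         # 95th percentile response latency in milliseconds
    cost_per_1k_requests: float   # cost in your billing currency


def should_promote(baseline: EvalResult, candidate: EvalResult,
                   max_accuracy_drop: float = 0.0,
                   max_latency_increase: float = 0.10,
                   max_cost_increase: float = 0.10) -> tuple[bool, list[str]]:
    """Return (decision, reasons); promote only if no metric regresses beyond its tolerance."""
    reasons = []
    if candidate.accuracy < baseline.accuracy - max_accuracy_drop:
        reasons.append("accuracy regressed")
    if candidate.p95_latency_ms > baseline.p95_latency_ms * (1 + max_latency_increase):
        reasons.append("latency regressed")
    if candidate.cost_per_1k_requests > baseline.cost_per_1k_requests * (1 + max_cost_increase):
        reasons.append("cost regressed")
    return (not reasons, reasons)
```

The tolerances would differ per use case: a chatbot might allow almost no latency increase, while a software engineering agent might accept slower responses in exchange for accuracy.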

AI

This is where we keep all the posts related to Artificial Intelligence and Machine Learning. From using LLMs to summarise to generating video content, this is the one-stop shop for Aviato AI and ML content.

Deploying ADK Agents to Agentspace

This post outlines the steps required to deploy an Agent Development Kit (ADK) Agent from Agent Engine to Agentspace. Hopefully Google will publish some docs on how to do this, and thanks to Andy Hood for figuring this out. If you do need help with this, reach out via https://aviato.consulting

Follow these instructions carefully to ensure a successful deployment.

Note: Both Agent Engine and AgentSpace have been recently renamed as part of Google’s AI branding, so you will still see references in the APIs to their previous names:

Prerequisites

Before beginning the deployment process, ensure you have the following:

Instructions for developing and deploying the ADK Agent are outside of the scope of this document.

Deployment Steps

The deployment process involves several key steps:

Step 1: ADK Agent deployed to Agent Engine

    LOCATION=us-central1
    PROJECT_ID=aviato-project-id
    TOKEN=$(gcloud auth print-access-token)
    curl -X GET "https://$LOCATION-aiplatform.googleapis.com/v1/projects/$PROJECT_ID/locations/$LOCATION/reasoningEngines" \
      --header "Authorization: Bearer $TOKEN"

Response (truncated):

    {"reasoningEngines": [{"name": "projects/123456789/locations/us-central1/reasoningEngines/123456789","displayName": "ADK Short Bot","spec": {…}

Note: when obtaining the ID via the Google Cloud Console, the ID may have the Project Id in the resource name. The AgentSpace API appears to require the Project Number instead.
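Because the AgentSpace API appears to need the Project Number in the resource name, a small helper can normalise whatever Step 1 or the Console returns. This is a minimal Python sketch: the function name `with_project_number` is hypothetical, and the resource name layout is taken from the truncated response above.

```python
import re

# Layout taken from the Step 1 response:
# projects/<project>/locations/<location>/reasoningEngines/<engine id>
_RESOURCE_RE = re.compile(
    r"^projects/(?P<project>[^/]+)/locations/(?P<location>[^/]+)"
    r"/reasoningEngines/(?P<engine>[^/]+)$"
)


def with_project_number(resource_name: str, project_number: str) -> str:
    """Return the resource name with its project segment set to the numeric project number."""
    if not project_number.isdigit():
        raise ValueError("project_number must be numeric, e.g. 123456789")
    match = _RESOURCE_RE.match(resource_name)
    if match is None:
        raise ValueError(f"unexpected resource name: {resource_name!r}")
    return (f"projects/{project_number}/locations/{match['location']}"
            f"/reasoningEngines/{match['engine']}")
```

A name that already uses the project number passes through unchanged, so the helper is safe to apply to either form.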
Step 2: Obtain the Id of your AgentSpace application

Option 1: Use the AgentSpace menu in the Google Cloud Console to obtain the ID of your AgentSpace application:

Option 2: Use the AgentSpace List Engines REST API to list the current AgentSpace applications in your project:

    # Note AgentSpace currently only supports the global, us and eu multi-regions
    LOCATION=global
    PROJECT_ID=aviato-project-id
    TOKEN=$(gcloud auth print-access-token)
    curl -X GET "https://discoveryengine.googleapis.com/v1alpha/projects/$PROJECT_ID/locations/$LOCATION/collections/default_collection/engines" \
      --header "Authorization: Bearer $TOKEN" \
      --header "x-goog-user-project: $PROJECT_ID"

Response (truncated):

    {"engines": [{"name": "projects/123456789/locations/global/collections/default_collection/engines/agentspace-andy_123456789","displayName": "Agentspace – Andy","createTime": "2025-06-05T22:55:44.459263Z",…}

Important Notes

Step 3: Publish the Agent Engine ADK Agent to AgentSpace application

The below requires the Project Number, e.g. 123456789, instead of the Project Id, e.g. aviato-project. To obtain the project number use:

    gcloud projects describe PROJECT_ID

Use the AgentSpace Create Agent REST API to publish your Agent Engine ADK Agent to your AgentSpace application. In the body of the POST request, ensure that you replace the reasoningEngine name with the ID returned in Step 1.
    # Note AgentSpace currently only supports the global, us and eu multi-regions
    LOCATION=global
    PROJECT_ID=aviato-project-id
    TOKEN=$(gcloud auth print-access-token)
    # Use the AgentSpace Application ID returned in the previous step
    AGENTSPACE_ID=agentspace-andy_1749164028618
    curl -X POST "https://discoveryengine.googleapis.com/v1alpha/projects/$PROJECT_ID/locations/$LOCATION/collections/default_collection/engines/$AGENTSPACE_ID/assistants/default_assistant/agents" \
      --header "Authorization: Bearer $TOKEN" \
      --header "x-goog-user-project: $PROJECT_ID" \
      --data '{
        "displayName": "My ADK Agent",
        "description": "Description of the ADK Agent",
        "adkAgentDefinition": {
          "tool_settings": {"tool_description": "Tool Description"},
          "provisionedReasoningEngine": {"reasoningEngine": "projects/123456789/locations/us-central1/reasoningEngines/123456789"}
        }
      }'

Response:

    {
      "name": "projects/123456789/locations/global/collections/default_collection/engines/agentspace-andy_1749164028618/assistants/default_assistant/agents/123456789",
      "displayName": "My ADK Agent",
      "description": "Description of the ADK Agent",
      "adkAgentDefinition": {
        "toolSettings": {"toolDescription": "Tool description"},
        "provisionedReasoningEngine": {"reasoningEngine": "projects/123456789/locations/us-central1/reasoningEngines/123456789"}
      },
      "state": "CONFIGURED"
    }

Important Notes

Step 4: Grant Required Permissions

In your project, AgentSpace runs under the Google-provided Discovery Engine Service Account with a name such as:

    service-$PROJECT_NUMBER@gcp-sa-discoveryengine.iam.gserviceaccount.com

By default, this service account only has the Discovery Engine Service Agent role. This is insufficient to invoke the Agent Engine ADK Agent, and you may get the error “I’m sorry, it seems you are not allowed to perform this operation” when invoking your ADK Agent in AgentSpace.
If you receive this error, grant the Vertex AI User role to the service account, e.g.:

    PROJECT_ID=aviato-project-id
    DISCOVERY_ENGINE_SA=service-123456789@gcp-sa-discoveryengine.iam.gserviceaccount.com
    gcloud projects add-iam-policy-binding $PROJECT_ID \
      --member="serviceAccount:$DISCOVERY_ENGINE_SA" \
      --role="roles/aiplatform.user"

Troubleshooting

If you encounter any issues during deployment:

By following these instructions, you should be able to successfully deploy your Agent Engine agent to Google Agentspace.
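The Step 3 request can also be assembled programmatically, which avoids hand-editing JSON inside a shell string. This is a sketch only: the URL and field names mirror the curl example in Step 3, while `agents_url` and `build_agent_payload` are hypothetical helper names, and nothing here calls the API.

```python
import json


def agents_url(project: str, location: str, agentspace_id: str) -> str:
    """Build the Create Agent endpoint from Step 3 (v1alpha, default collection and assistant)."""
    return (
        "https://discoveryengine.googleapis.com/v1alpha/"
        f"projects/{project}/locations/{location}/collections/default_collection/"
        f"engines/{agentspace_id}/assistants/default_assistant/agents"
    )


def build_agent_payload(display_name: str, description: str,
                        tool_description: str, reasoning_engine: str) -> str:
    """Return the JSON request body, using the same field names as the curl example."""
    body = {
        "displayName": display_name,
        "description": description,
        "adkAgentDefinition": {
            "tool_settings": {"tool_description": tool_description},
            # Resource name from Step 1, using the Project Number rather than the Project Id
            "provisionedReasoningEngine": {"reasoningEngine": reasoning_engine},
        },
    }
    return json.dumps(body)
```

The resulting string can be passed to curl with `--data`, or sent with any HTTP client together with the same two headers used in Step 3.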

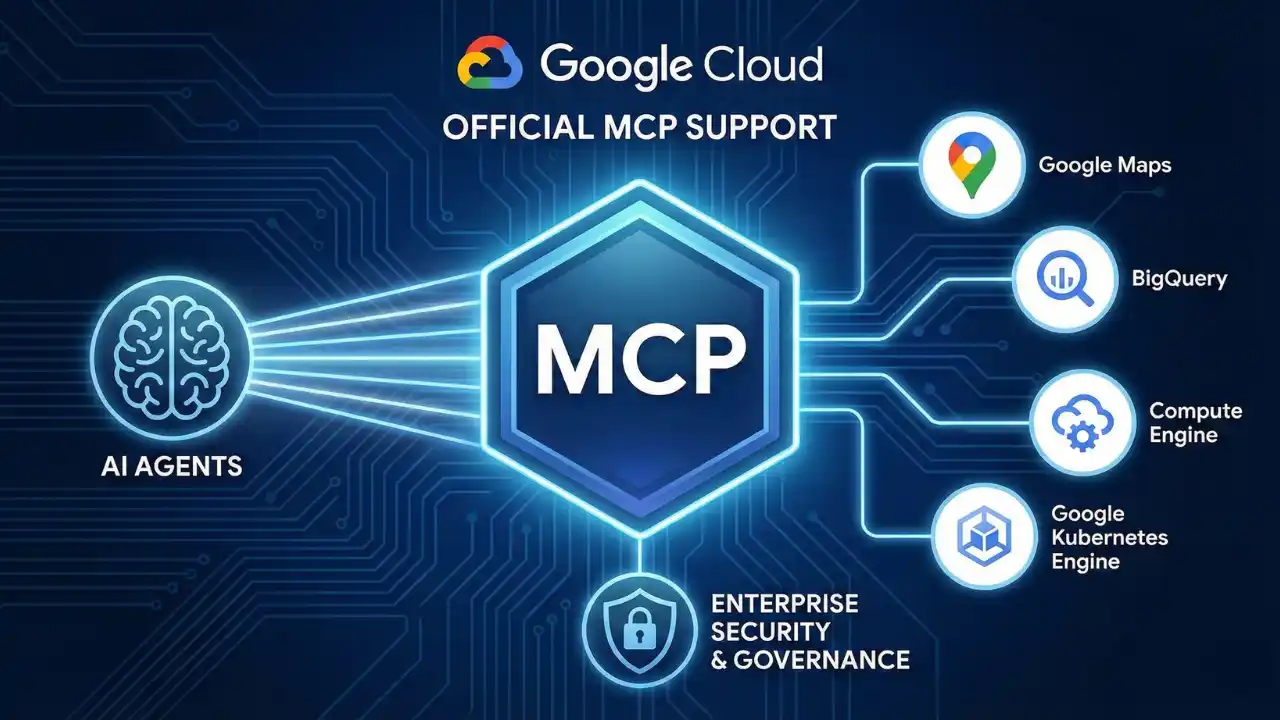

MCP and Agentic AI on Google Cloud Run

We have moved from AI that primarily responds via text to AI that manipulates things. These “agentic AI” systems use tools to do that manipulation. The way they interact with tools is via the Model Context Protocol (MCP), an open source standard that helps LLMs connect to and use external data sources and tools. How and where you run these tools is the purpose of this article. The TL;DR is: “Google Cloud Run”. But read on if you want the details and the points to look out for.

Why Cloud Run for Agentic AI?

When it comes to deploying these sophisticated agentic AI systems, Google Cloud Run emerges as a great choice: its serverless nature is highly scalable and cost efficient, and it requires no ops team to keep it running. Aviato often recommend it as part of our AI Deployment Services. It can also easily connect to any databases or LLMs running on Google Vertex AI without leaving your network (VPC). What previously might have required dedicated SRE and DevOps teams can now be tackled by an individual developer, freeing up time to innovate on the actual AI agent.

Architecting Your Agent on Cloud Run

So, what does a typical agentic AI architecture on Cloud Run look like? At its core, you’ll have:

MCP Servers

Cloud Run is a well known pattern for all of this, but with MCP being so new and the focus of this article, it is worth taking a step back to explain what the Model Context Protocol (MCP) does. MCP directly addresses the inability of an LLM to use tools; it provides a standardized, structured way for systems to expose their capabilities to language models. Here are some examples:

Further, the use of MCP lets us change our LLM as new ones are released, improving your agents without rebuilding from scratch. A great benefit of using Vertex AI is that we can do this with a line of code.

Practical MCP Server Deployment on Cloud Run

Here’s a quick overview of how you can get your MCP servers up and running on Cloud Run for non production uses.
Deployment from Container Images

If your MCP server is already packaged as a container image (perhaps from Docker Hub), deploying it is straightforward. You’ll use the command:

    gcloud run deploy SERVICE_NAME --image IMAGE_URL --port PORT

For instance, deploying a generic MCP container might look like:

    gcloud run deploy my-mcp-server --image us-docker.pkg.dev/cloudrun/container/mcp --port 3000

Deployment from Source

This is the recommended approach if you are deploying a production use case. If you have the source code for an MCP server (perhaps from GitHub), you can deploy it directly. Simply clone the repository, navigate into its root directory, and use:

    gcloud run deploy SERVICE_NAME --source .

Cloud Run will handle the building and deployment, or you can work this into a CI/CD pipeline for a more production ready use case.

Cloud Run does not support MCP servers that rely on Standard Input/Output (stdio) transport. This constraint implicitly pushes MCP server development towards web-centric, network-addressable services, which aligns better with cloud-native architectures and scalability. Developers should use frameworks like FastMCP (the standard Python SDK wrapper) with transport="streamable-http" to align natively with Cloud Run’s architecture.

State Management Strategies for Agentic AI on Cloud Run

Fortunately, Google Cloud provides robust solutions for managing the various types of state your agentic AI systems will require:

Short Term Memory / Caching

For data that needs fast access, like session information or frequently accessed data for an agent, connecting your Cloud Run service to Memorystore for Redis is an excellent option.

Long-term Memory / Persistent Knowledge

For storing conversational history, user profiles, or other forms of persistent agent knowledge, Firestore offers a scalable, serverless NoSQL database solution.
If your agent deals with structured data or requires the powerful RAG capabilities discussed earlier, Cloud SQL for PostgreSQL or AlloyDB for PostgreSQL are ideal choices, as is any of the many stores that work with Google’s Vertex AI RAG Engine.

Orchestration Framework Memory

Many AI orchestration frameworks, such as LangChain, come with built-in memory modules. For example, LangChain’s ConversationBufferMemory can store conversation history to provide context across multiple turns. These often integrate with external stores for persistence.

Table 1: State Management Options for Agentic Systems on Cloud Run

Choosing the right state management approach depends heavily on the specific requirement:

The Challenge of Stateful MCP Servers

As highlighted, MCP servers using Streamable HTTP transport might need to maintain a persistent session context, especially to allow clients to resume interrupted connections. The core challenge here (as of June 2025) is that many official MCP SDKs lack support for external session persistence, that is, storing session state in a dedicated service like Redis. Instead, they often keep the session state in the memory of the server instance. This makes horizontal scaling problematic: if a client’s subsequent request is routed by a load balancer to a different instance from the one that initiated the session, the session context is lost, and the connection will likely fail. This limitation in current MCP SDKs points to a maturity gap in the ecosystem. Until SDKs evolve to better support externalized state, designing MCP servers to be stateless is the more resilient cloud native pattern where feasible.

Cloud Run Session Affinity to the Rescue?

Cloud Run offers a feature called session affinity that can help mitigate this issue. When enabled, Cloud Run uses a session affinity cookie to attempt to route sequential requests from a particular client to the same revision instance.
You can enable session affinity with a gcloud command:

    gcloud run services update SERVICE --session-affinity

Or via the Google Cloud Console or YAML config. However, it’s crucial to understand that session affinity on Cloud Run is “best effort”. If the targeted instance is terminated (due to scaling, etc.) or becomes overwhelmed (reaching maximum request concurrency, etc.), session affinity will be broken, and subsequent requests will be routed to a different instance. So if the in-memory state is absolutely critical and irreplaceable, session affinity alone is not

Video Post: AI with BigQuery And SQL

Stop waiting to unlock the power of AI! You already know SQL… and that’s ALL you need.

Transcript

If you have a lot of data stored on Google Cloud for analytics, it’s probably going to be stored in BigQuery. Now, everyone’s trying to do AI, and they’re training models, using pre-existing models, spending a lot of money on data scientists. But I’ve got some great news if you’re using BigQuery: BigQuery has BigQuery ML built into it. This lets you run AI against your BigQuery dataset by using SQL. Now, SQL is the language used by all the people doing queries, or database administrators. It’s very simple to use and you probably already have the skills, so you don’t need to go and hire expensive data scientists and AI engineers to gather insights from your data.

I’m going to break down some of the models that are built into BigQuery ML to see if these are going to solve business problems for you. The first one is linear regression. This is predicting how much you’ll sell based on past data, so if you’re planning stock or staffing, or trying to run promotions more accurately, this is for you. It’s built in and can be run with SQL. The next one is logistic regression. This is sorting things into categories. So let’s say you want to sort customers into categories to see whether they’re going to buy from you again, or, if products are faulty, putting them into categories saying these products are likely going to be faulty. So you can see the use cases for business. The next one is k-means clustering. This finds hidden groups within your customer base so you can target marketing campaigns towards them. The next is matrix factorisation: suggesting which customers might be likely to buy an item. This is kind of what you’ll see when you get predictive things on websites saying “you might also like to buy X”. I think we’re up to the fifth one: PCA, or principal component analysis. This is simplifying complex data to find the most important pattern, helping you spot trends and make more informed decisions. The last one I’ve got is time series: predicting future sales based on past data, helping you predict demand and make sure you have enough stock based on things that have happened in the past.

So all of these models are already built into BigQuery ML, as I said, and can be accessed using SQL queries, which you likely already have the skills for in your organisation, enabling your organisation to make AI-enabled decisions without spending an absolute fortune. If you want help with any of this, reach out. Aviato Consulting can help you. Thanks.

Video Post: Google Cloud Vertex AI & Hugging Face

Who Really Owns Your App?

Transcript

Did you know that most app developers in Australia don’t let you own the IP that they develop for you? Let me break that down for you. You pay someone to write an app for you. They let you use it: you’re licensed to use it, commercialize it, change it, but you don’t actually own the intellectual property. Now, what would happen if someone else had a similar idea to you and went to the same app developer? They could sell the code that you paid them to develop to this other person, doubling their money the next day. All they need to do is change the colors and logo, maybe make a few slight changes, and you’re going to have a competitor. Now the app developer’s made a ton of cash on this, cuz they’ve sold the same thing twice and only done the work once, but you’ve got a competitor that’s going to compete with you, and I don’t think that’s really fair.

And that’s not what we do at Aviato. At Aviato, if you pay us to develop your app, you own the intellectual property. We aren’t going to steal it and use it elsewhere, and we’re not going to sell it to a competitor a few days after we finish your project so that you then have someone to compete with in the marketplace. If you are looking to get an app developed, this is really something that you should be checking with the app developer that you’re going to use. Reach out to us if you do want to get an app developed. Thanks.

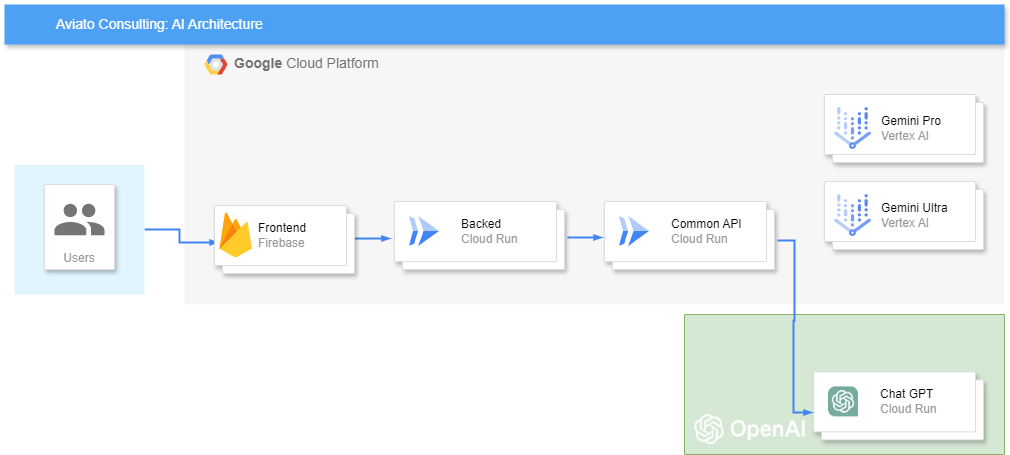

Navigating the AI Maze

The AI landscape is exploding with a dizzying array of models. There are the Large Language Models (LLMs) most of us have experimented with, like Llama 2 or 3 from Meta, Claude 2 from Anthropic, Bard (now Gemini) from Google, and the original ChatGPT. Each boasts unique capabilities, from generating different creative text formats to translating languages and answering your questions in an informative way. To make this even more confusing, we also have models that excel at robotics, tabular regression, image generation, or depth estimation.

Choosing The Right Model For Your Business

While this abundance offers exciting possibilities, it also presents a significant challenge: choosing the right model for your specific needs, and doing it within your budget. Pricing varies greatly: going from Gemini 1.0 Advanced to 1.5 Ultra is a 10x cost differential. For businesses, this creates a difficult puzzle: how do you select the optimal model without getting lost in the ever evolving AI arms race?

The answer lies in flexibility. Instead of locking into a single model, businesses need an adaptable infrastructure that allows them to test their business use case against one model, evaluate the performance and then try another, without rebuilding the solution. Additionally, as new models are released, being able to quickly test whether they deliver an uplift gives you a competitive advantage over others that need to rebuild their solution. This ability to quickly swap out models offers several key benefits:

Architecture

Architecting a solution that works for your business can be easily achieved on Google Cloud with Vertex AI, but this will exclude you from using ChatGPT or other models not available on Huggingface.co.

LLMs on Google Cloud Vertex AI

Google Cloud’s Vertex AI provides the perfect platform for achieving this flexibility.
It allows businesses to seamlessly deploy, manage, and experiment with various LLMs through a unified interface. If you are not happy with the 50 or so models they have, you can deploy one from Huggingface.co, which has over 600,000 models to choose from. The alternative for non-Google customers could be to write a common API, which would give you the flexibility to swap out models or use ChatGPT, which is one model that you cannot find on either Google or Huggingface.co.

Any LLM With a Common API

Either option empowers you to leverage the strengths of different models, test each of them against your unique problems, and stay ahead of the curve in the rapidly evolving AI landscape. Aviato Consulting, a Google Cloud Partner, specializes in helping businesses navigate the complexities of AI and implement flexible LLM solutions on Vertex AI. Our expertise ensures you harness the full potential of AI, maximizing its value for your specific business needs.
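The common API idea described above can be sketched in a few lines of Python. This is an illustration under assumptions, not a real SDK: `TextModel`, `EchoModel`, `UppercaseModel`, `evaluate` and `best_model` are hypothetical names, and the two stand-in models would be replaced by thin adapters around whichever provider clients you actually use.

```python
from typing import Protocol


class TextModel(Protocol):
    """The one interface the rest of the solution depends on."""
    name: str

    def generate(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in for a provider adapter; returns the prompt unchanged."""
    name = "echo"

    def generate(self, prompt: str) -> str:
        return prompt


class UppercaseModel:
    """Second stand-in adapter with different behaviour."""
    name = "uppercase"

    def generate(self, prompt: str) -> str:
        return prompt.upper()


def evaluate(model: TextModel, cases: list[tuple[str, str]]) -> float:
    """Return the fraction of (prompt, expected) cases the model answers exactly."""
    if not cases:
        return 0.0
    hits = sum(1 for prompt, expected in cases if model.generate(prompt) == expected)
    return hits / len(cases)


def best_model(models: list[TextModel], cases: list[tuple[str, str]]) -> TextModel:
    """Pick the model with the highest score on your own test cases."""
    return max(models, key=lambda m: evaluate(m, cases))
```

Because the application only ever calls `generate`, trying a newly released model means writing one more adapter and rerunning the same test cases, rather than rebuilding the solution.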

Aviato, Your Google Cloud Partner for AI

The AI revolution is here, and businesses that embrace its potential stand to gain a significant competitive edge. However, navigating the complex world of AI can be daunting. That’s where Aviato Consulting, a trusted Google Cloud Partner, steps in to guide your business through a successful AI journey on Google Cloud.

Unlocking AI Potential with Aviato and Google Cloud:

Why Choose Aviato Consulting:

Examples of AI Use Cases Aviato Can Help Implement:

Embrace the AI Future with Aviato and Google Cloud:

Partner with Aviato Consulting to unlock the transformative power of AI for your business. Leveraging Google Cloud’s advanced AI technologies and Aviato’s expertise, you can gain a competitive edge, optimize your operations, and drive innovation. Let us be your trusted guide on your AI journey.